Amazon, founded in 1994 in Washington, started as an online bookstore but has since transformed into a global technology company. Headquartered in Seattle, WA, Amazon is widely known for its e-commerce platform, [Amazon.com](http://amazon.com/), and its leadership in cloud computing through Amazon Web Services (AWS), launched in 2006. AWS holds 28% of the cloud computing market and works with over 90% of Fortune 100 companies.

Amazon Web Services (AWS) provides over 200 services that span data storage, computing, machine learning (ML), and artificial intelligence (AI). The AWS Marketplace hosts more than 30,000 cloud solutions and connects third-party sellers with buyers. AWS serves diverse sectors, including government, enterprises, educational institutions, and individuals. It operates in 123 Availability Zones across 39 global regions.

Amazon Redshift plays a foundational role in AWS’s data and analytics portfolio. It is a fully managed relational data warehouse designed for large-scale data processing. Redshift integrates with other AWS services, such as Amazon S3-based data lakes and Amazon SageMaker, so users can apply advanced analytics and machine learning within the same ecosystem. With its columnar storage and massively parallel processing (MPP) architecture, Redshift provides optimized query performance for analytics and reporting.

Amazon Redshift supports efficient workflows in which users load data from Amazon S3 into Redshift for processing, then deliver query results back to S3. Redshift Spectrum, meanwhile, runs SQL directly on data stored in S3 without loading it into Redshift tables, which reduces both data movement and storage costs.
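The load, unload, and in-place query patterns described above can be sketched as SQL statements assembled in Python. This is a minimal illustration rather than a reference implementation: the bucket paths, table names, IAM role ARN, and external schema name are hypothetical placeholders, Parquet is an assumed file format, and the external schema used for the Spectrum query is assumed to exist already. The functions only build statement text; executing it would require a SQL client connected to a Redshift cluster or workgroup.

```python
# Hypothetical placeholder; substitute a role that can read and write the bucket.
EXAMPLE_IAM_ROLE = "arn:aws:iam::123456789012:role/ExampleRedshiftRole"


def copy_from_s3(table: str, s3_prefix: str, iam_role: str = EXAMPLE_IAM_ROLE) -> str:
    """Build a COPY statement that loads Parquet files from S3 into a table."""
    return (
        f"COPY {table}\n"
        f"FROM '{s3_prefix}'\n"
        f"IAM_ROLE '{iam_role}'\n"
        "FORMAT AS PARQUET;"
    )


def unload_to_s3(query: str, s3_prefix: str, iam_role: str = EXAMPLE_IAM_ROLE) -> str:
    """Build an UNLOAD statement that writes query results back to S3."""
    # UNLOAD takes the query as a quoted string, so single quotes are doubled.
    escaped = query.replace("'", "''")
    return (
        f"UNLOAD ('{escaped}')\n"
        f"TO '{s3_prefix}'\n"
        f"IAM_ROLE '{iam_role}'\n"
        "FORMAT AS PARQUET;"
    )


def spectrum_query(external_schema: str, table: str, limit: int = 10) -> str:
    """Build a query against an external (S3-backed) table, with no load step."""
    return f"SELECT * FROM {external_schema}.{table} LIMIT {limit};"


if __name__ == "__main__":
    # All names below are illustrative assumptions, not real resources.
    print(copy_from_s3("sales", "s3://example-bucket/incoming/sales/"))
    print(unload_to_s3(
        "SELECT region, SUM(amount) FROM sales WHERE sale_date >= '2025-01-01' GROUP BY region",
        "s3://example-bucket/results/sales_by_region_",
    ))
    print(spectrum_query("example_spectrum_schema", "clickstream"))
```

Keeping the three patterns as separate statements mirrors the trade-off in the text: `COPY` and `UNLOAD` move data into and out of Redshift storage, while the external-table query leaves the data in S3.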

Recent enhancements to Amazon Redshift include the following:

- Expanded zero-ETL integrations: AWS now supports near-real-time replication and filtering from 17 AWS platforms into Redshift.

- Data sharing for data lake tables: Customers can securely share data at various levels, including tables and views, via Redshift and Lake Formation.

- Open lakehouse table support (Iceberg): Redshift users can create and write to Apache Iceberg tables stored in Amazon S3 and Amazon S3 table buckets.

- Automation and self-tuning: Redshift automatically optimizes performance, storage, and resource scaling across clusters and serverless environments with minimal manual tuning.

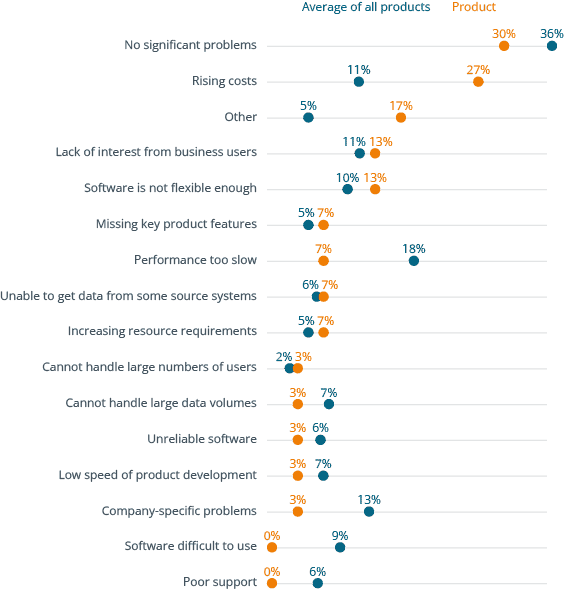

Between 2025 and 2026, buyers of Amazon Redshift shifted from emphasizing functional fit and ease of use toward prioritizing cost/performance, connectivity, and architectural fit. This reflects rising overall interest in FinOps for cloud cost governance and in integration with Apache Iceberg for open table formats. In 2026, “lack of interest from business users” remained a top problem, and concerns about pricing and complexity remained higher than the overall survey average. While user concerns about enterprise readiness declined, a lack of product “know-how” soared to become the top problem, cited by 37% of Redshift users, nearly twice the overall average. This indicates a need for AWS to invest more in product training for Redshift users.

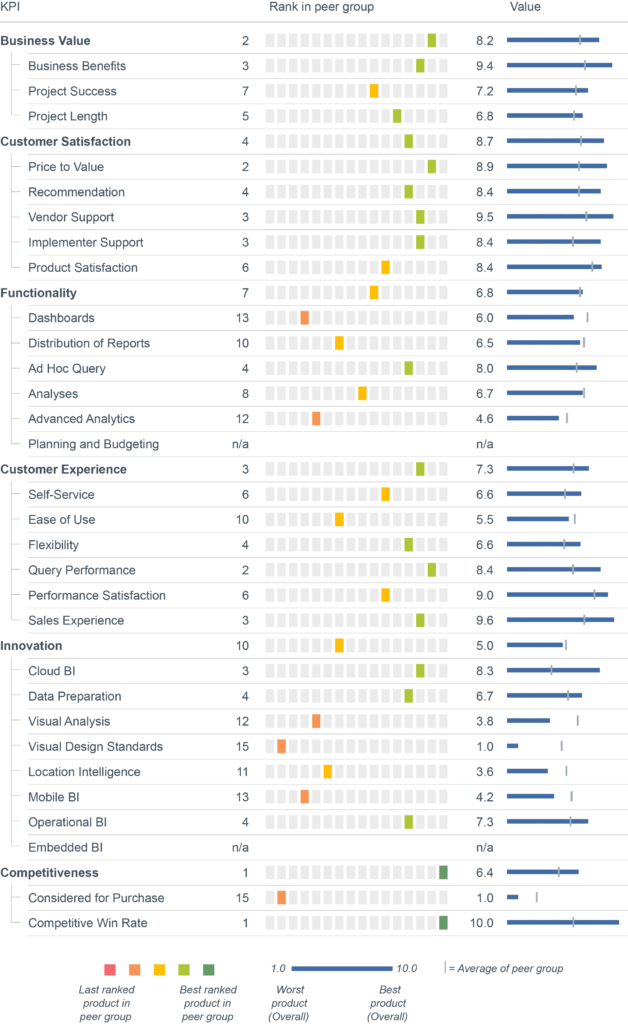

Redshift’s primary sustainable advantage seems to be AWS’s overall incumbent position in cloud computing and its native integration with the AWS tool ecosystem. The product itself shows weakness. Between 2025 and 2026, Redshift’s peer group rankings worsened and came in below average for most KPIs. Redshift fell from a #1 Adaptability score to average. Its Project Length slipped from average to dead last, and Technical Foundation went from average to below average. User Experience, meanwhile, fell from above average to below average.

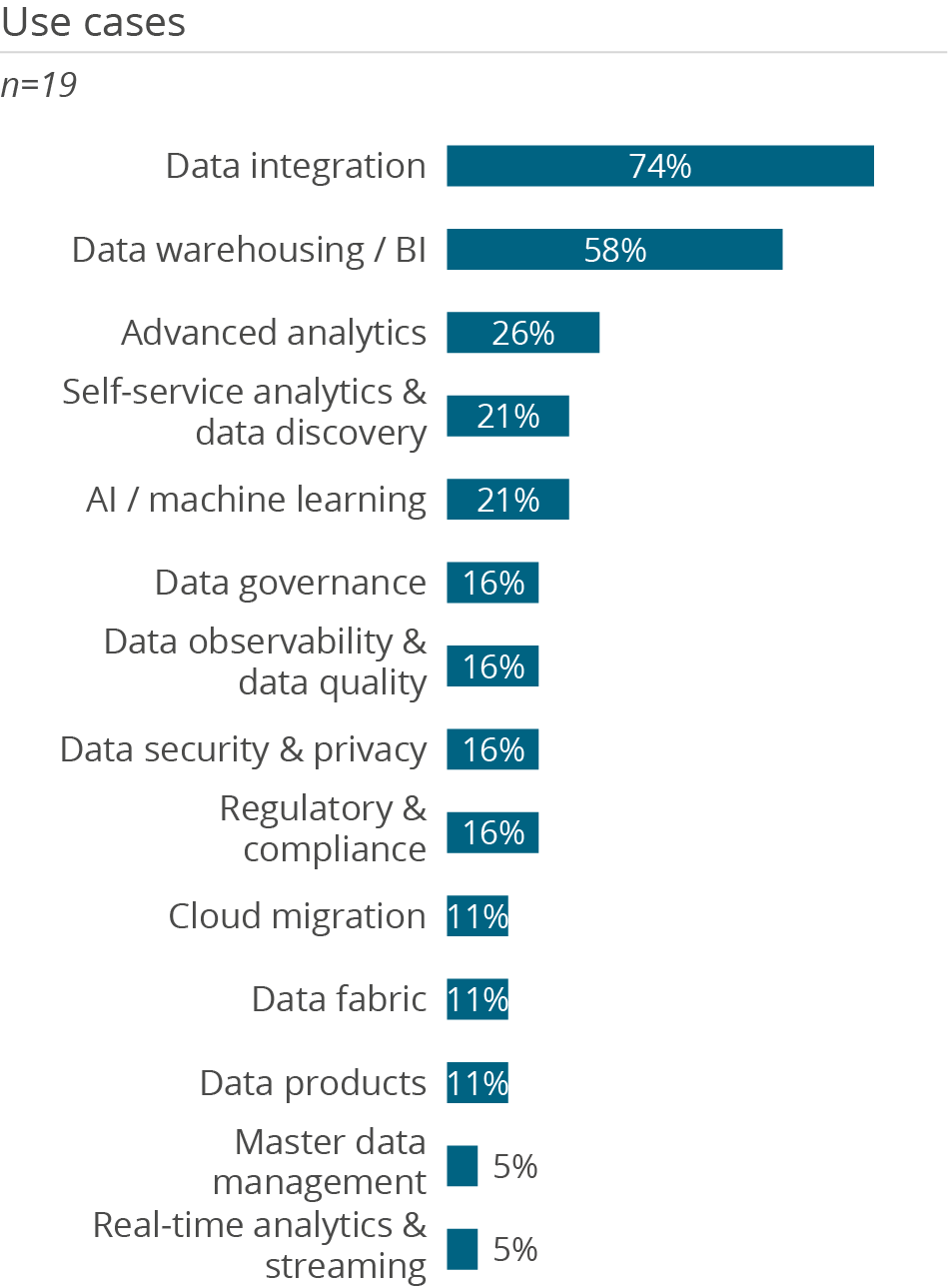

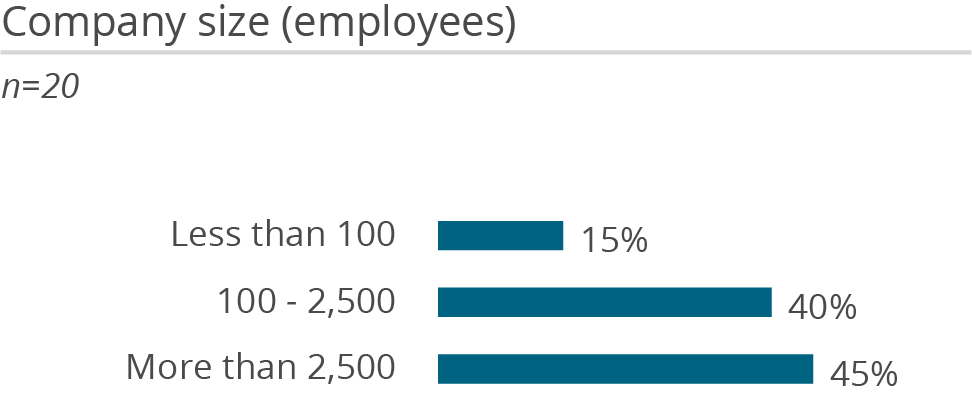

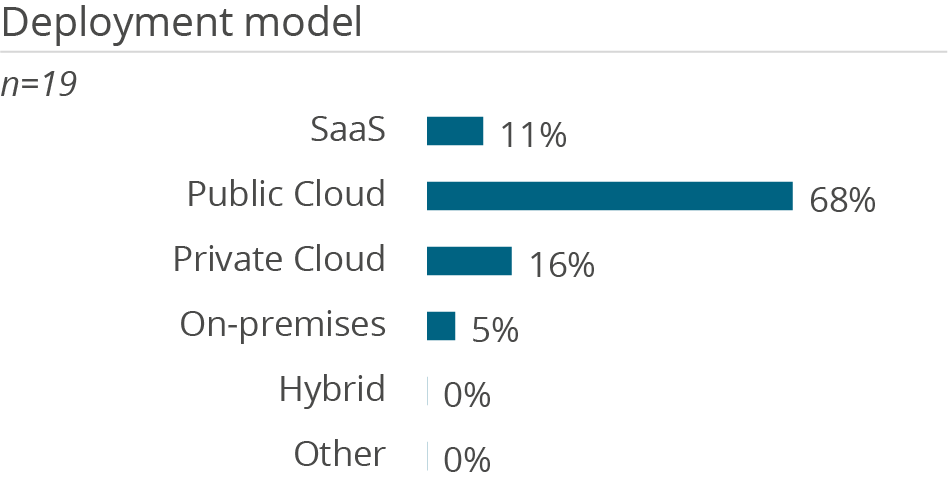

Amazon Redshift is best suited for cloud-native and cloud-first enterprises that are already standardized on AWS and want a tightly integrated analytics platform along with an ecosystem of advanced AI tools. The primary buyers are heads of data engineering, cloud architects, and analytics platform leaders who focus on balancing performance, scalability, and cost governance. Common use cases include enterprise data warehousing, lakehouse architectures with Apache Iceberg, and near-real-time ingestion from AWS operational systems. Redshift also fits well for powering BI, SQL analytics, and machine learning workloads alongside services such as Amazon S3 and Amazon SageMaker. While AWS supports data sourced from on-premises systems, its cloud-native offerings are less well suited to these hybrid environments.